Google Meet shipped near-real-time speech translation on mobile this week. Microsoft launched real-time voice agents in Copilot Studio days earlier. Both announcements promise to dissolve language barriers during calls and video conferences. Both are being evaluated right now by Philippine BPO operations, hospitals, and government contact centers where agents routinely switch between Tagalog, English, Cebuano, Mandarin, and Japanese within a single shift.

The marketing sounds clean. The Philippine implementation reality is anything but.

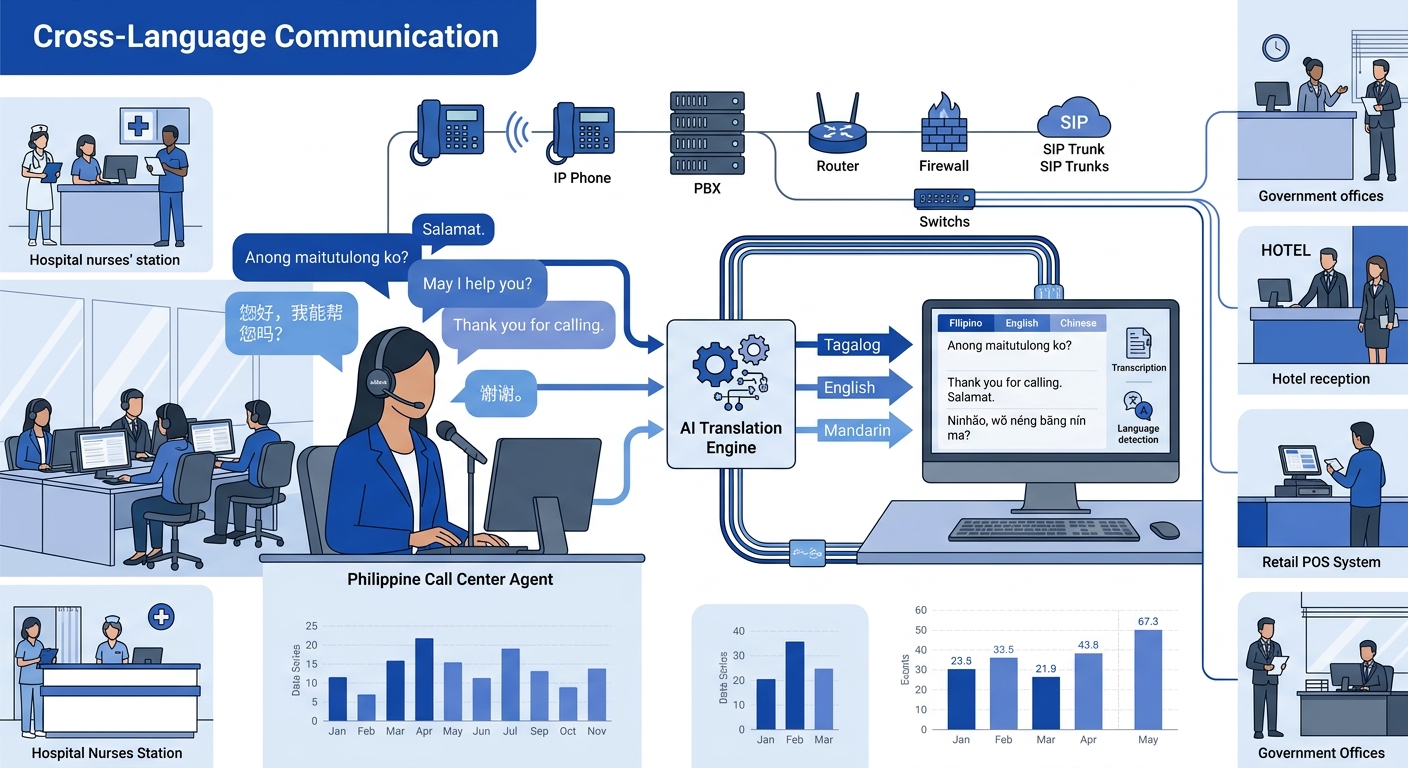

If you’re evaluating AI-powered VoIP translation for a Philippine deployment, whether that’s a 500-seat Makati call center or a provincial government hotline, you’re choosing between three distinct technical approaches. Each carries tradeoffs that vendor brochures skip over, and Philippine network latency makes every one of them harder than the demo suggests.

Cloud Translation APIs Layered on Top of Existing VoIP

This is the path of least resistance. Services like Wordly and Talo offer AI translation engines that sit alongside your existing phone system (Yeastar P-Series, Cisco UCM, or whatever PBX you’re running) and process audio streams through cloud-hosted models. Wordly advertises extensive language testing and glossary tools for domain-specific terminology. Talo uses a single AI bot that listens to every participant’s speech and translates without requiring separate accounts per language.

The appeal is obvious: you don’t rip out your existing telephony. You bolt on a translation layer. Some of these services claim support for 150+ languages and accents, including the kind of mixed-language speech that Filipino agents produce constantly.

Where this breaks in the Philippines

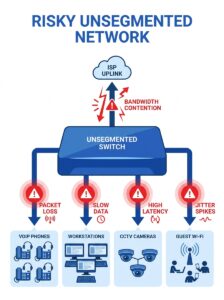

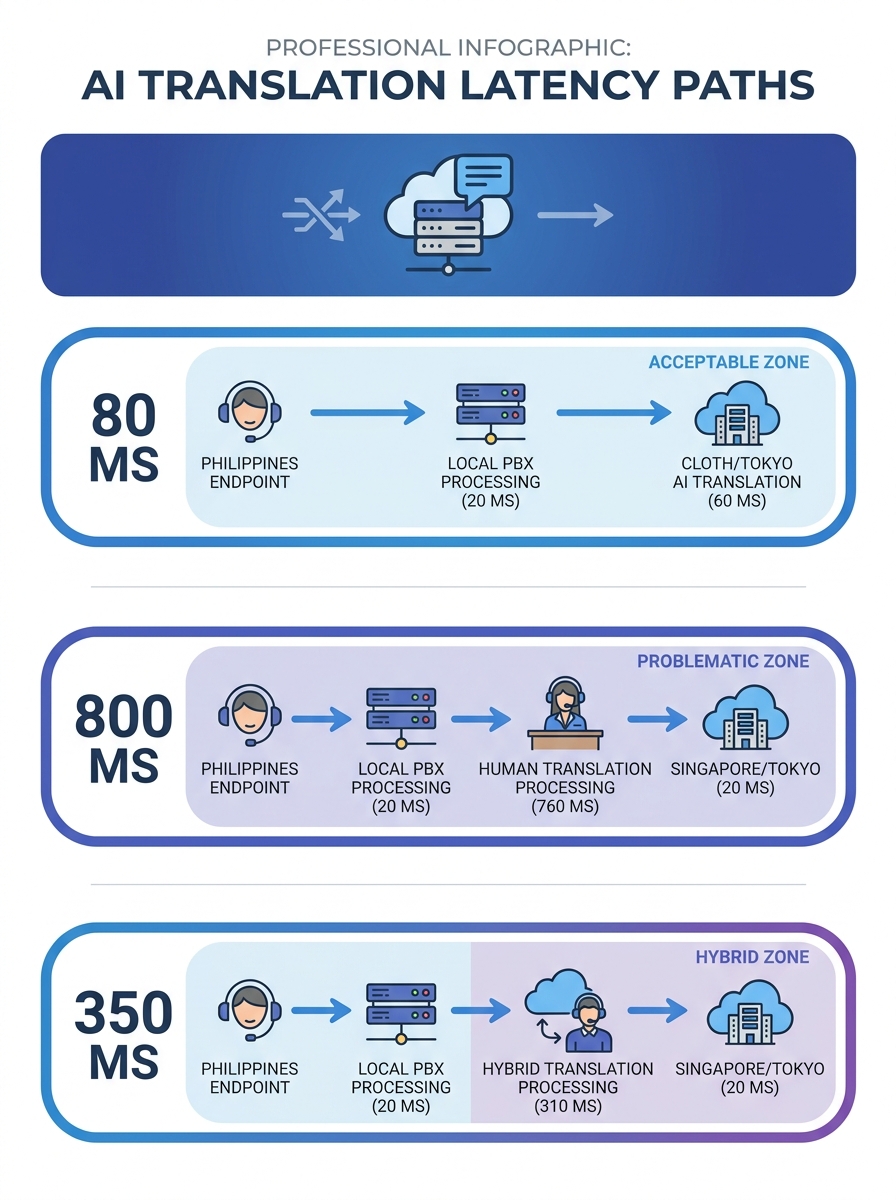

The fundamental problem is round-trip latency. Every audio frame has to travel from the handset to your PBX, from your PBX to the cloud translation API (usually hosted in Singapore, Tokyo, or US-West), get processed by the ASR engine, translated, synthesized back into speech, and returned. Philippine fixed broadband and mobile infrastructure adds meaningful delay at every hop. The country continues to lag in mobile internet speed compared to regional peers, and 5G coverage remains patchy outside Metro Manila and parts of Cebu.
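The round trip described above can be sketched as a simple latency budget. This is a minimal illustration, not a measurement: the stage names follow the path in the paragraph, but every delay value is a hypothetical placeholder to be replaced with numbers from your own network and vendor.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    delay_ms: float  # hypothetical placeholder; replace with measured values


# Stages mirror the cloud path described above. All numbers are assumptions.
CLOUD_PATH = [
    Stage("handset -> PBX", 10),
    Stage("PBX -> cloud API", 60),
    Stage("ASR", 150),
    Stage("translation", 80),
    Stage("speech synthesis", 120),
    Stage("cloud API -> PBX -> handset", 70),
]


def total_delay_ms(stages):
    """Sum the per-stage delays for one translated utterance."""
    return sum(stage.delay_ms for stage in stages)


def slowest_stage(stages):
    """Return the stage that contributes the most delay."""
    return max(stages, key=lambda stage: stage.delay_ms)


if __name__ == "__main__":
    print(f"total delay: {total_delay_ms(CLOUD_PATH)} ms")
    print(f"slowest stage: {slowest_stage(CLOUD_PATH).name}")
```

Filling in this table with measured values per site shows quickly whether the network legs or the processing stages dominate, which in turn tells you whether QoS work or a different deployment model is the better investment.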

Telecom insiders point to local government permitting bottlenecks that delay tower construction and congest existing infrastructure. That congestion directly degrades real-time VoIP translation. When jitter spikes above 30ms or packet loss crosses 1%, cloud-based ASR accuracy drops sharply. Research published in the International Journal of Speech Technology confirms that VoIP systems must function under low-connectivity conditions where bandwidth and jitter are unpredictable. Those are exactly the conditions many Philippine sites operate under daily.

Then there’s the speech recognition challenge specific to Filipino speakers on the line. Non-native spoken-word recognition is measurably harder than native recognition, according to research from ACM’s distributed computing proceedings, especially in the presence of background noise. Filipino English is heavily accented, peppered with Tagalog insertions, and shaped by regional variation. A cloud API trained predominantly on American or British English corpora will struggle with a Davao-based agent saying “Ma’am, pasensya na po, paki-hold lang” mid-sentence.

When cloud APIs earn their cost

Cloud translation works best when you have reliable fiber connectivity (at least 20 Mbps symmetrical with sub-50ms latency to Singapore), your call volume is moderate (under 200 concurrent translated calls), and you’ve already configured QoS policies that prioritize voice traffic. If your network can’t guarantee those conditions, you’ll spend more time troubleshooting audio gaps than you save on translation.
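Those conditions can be turned into a quick pre-purchase checklist. The sketch below encodes only the thresholds named above; the `SiteProfile` type and its field names are hypothetical and exist purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class SiteProfile:
    """Hypothetical description of one site; field names are illustrative."""
    symmetric_mbps: float
    latency_to_singapore_ms: float
    peak_concurrent_translated_calls: int
    voice_qos_configured: bool


def cloud_readiness_gaps(site):
    """Return the list of conditions from the text that the site fails."""
    gaps = []
    if site.symmetric_mbps < 20:
        gaps.append("bandwidth below 20 Mbps symmetrical")
    if site.latency_to_singapore_ms >= 50:
        gaps.append("latency to Singapore is not sub-50ms")
    if site.peak_concurrent_translated_calls >= 200:
        gaps.append("200 or more concurrent translated calls")
    if not site.voice_qos_configured:
        gaps.append("no QoS policy prioritizing voice traffic")
    return gaps


if __name__ == "__main__":
    provincial_site = SiteProfile(15, 95, 40, False)
    for gap in cloud_readiness_gaps(provincial_site):
        print("gap:", gap)
```

An empty list means the site meets the stated conditions. It does not guarantee good translation quality, only that the network is not the obvious blocker.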

When the Phone System Itself Handles Speech Recognition

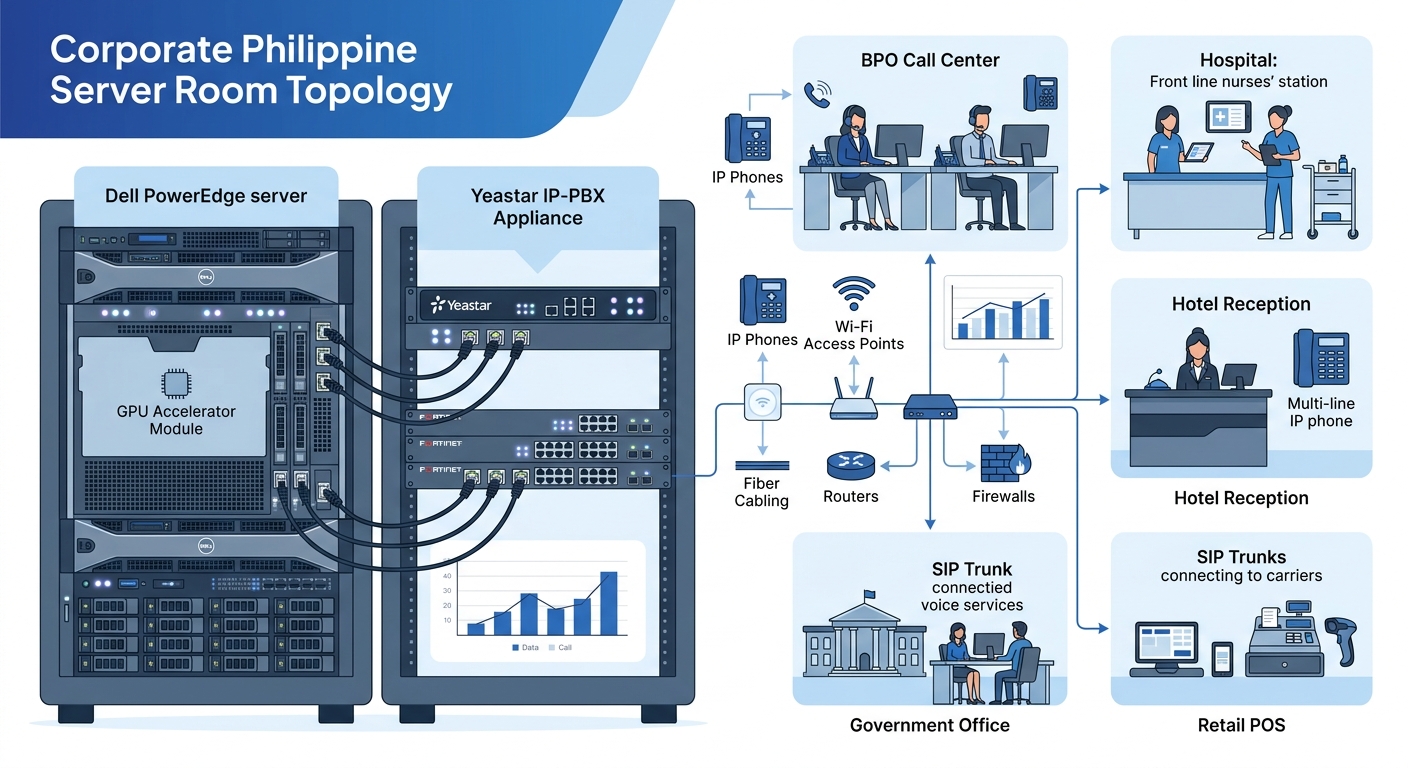

The second approach puts ASR directly into your PBX or UC platform. Cisco’s Webex AI Assistant, Microsoft Teams with Copilot, and some Yeastar configurations can run speech-to-text and basic translation functions closer to the edge, sometimes on the PBX appliance itself, sometimes on a local server paired with the phone system.

This reduces one critical variable: the audio doesn’t have to travel to a distant cloud for initial processing. If your Yeastar P-Series is sitting in the same server room as your agents, the ASR processing latency can drop below 200ms for speech-to-text alone. Translation still adds overhead, but you’ve eliminated the slowest leg of the round trip.

The Philippine-specific obstacles

Compute requirements are the first barrier. Running a local ASR model capable of handling Filipino-accented English, Tagalog, Cebuano, and Mandarin requires GPU-class hardware. A Dell PowerEdge server with an NVIDIA A30 or comparable accelerator costs PHP 800,000 to PHP 1.5 million depending on configuration, and you’ll need IT staff who can manage inference workloads. According to a recent Aon study, only 17 percent of Philippine employers can recruit and retain workers with AI skills. That shortage is acute in provincial cities where many BPO satellite offices and government contact centers operate.

Language model coverage is thinner than cloud offerings, too. Cloud APIs get continuous updates from millions of conversations. A locally deployed model is frozen at whatever version you installed, unless your team actively retrains it. Retraining on Filipino speech patterns requires labeled training data that barely exists in sufficient volume for languages like Pangasinan, Ilocano, or Hiligaynon.

But there’s a meaningful upside. Research from Frontiers in Signal Processing found that training ASR on network-distorted speech increased recognition accuracy by 82%. If you capture actual call recordings from your Philippine VoIP network, with all their compression artifacts, jitter-induced gaps, and codec distortions, and fine-tune your local model on that data, you’ll get substantially better recognition than any generic cloud API. This is the real advantage of embedded ASR: you can train it on your specific acoustic environment and build intelligent call systems tuned to your agents’ actual speech patterns.
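To make "network-distorted speech" concrete, here is a minimal sketch of one distortion: whole audio frames dropped, as happens when RTP packets are lost and nothing conceals the gap. This is a synthetic stand-in for illustration only, not the method used in the cited research, and real call recordings from your own network remain the better training source. The function name and defaults are assumptions; 160 samples corresponds to a 20ms frame at 8 kHz.

```python
import random


def simulate_packet_loss(samples, frame_len=160, loss_rate=0.05, seed=0):
    """Zero out whole frames to mimic lost packets with no concealment.

    samples:   sequence of audio sample values (floats or ints)
    frame_len: samples per packet (160 = 20 ms at 8 kHz)
    loss_rate: probability that any given frame is dropped
    seed:      fixed seed so the same distortion can be reproduced
    """
    rng = random.Random(seed)
    distorted = list(samples)
    for start in range(0, len(distorted), frame_len):
        if rng.random() < loss_rate:
            end = min(start + frame_len, len(distorted))
            distorted[start:end] = [0.0] * (end - start)
    return distorted


if __name__ == "__main__":
    clean = [0.5] * 1600  # 200 ms of placeholder audio at 8 kHz
    lossy = simulate_packet_loss(clean, loss_rate=0.3, seed=7)
    dropped = sum(1 for value in lossy if value == 0.0) // 160
    print(f"dropped {dropped} of 10 frames")
```

A fuller pipeline would also model codec compression and jitter-buffer artifacts, which this sketch leaves out.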


When embedded ASR pays off

Embedded ASR pays off for large BPO operations with 500+ seats, dedicated IT teams, and the capital budget for GPU hardware, and for organizations already running intelligent call routing that want to extend their AI investment into translation. Government agencies with data sovereignty requirements should pay close attention here: the Philippine government is targeting an AI governance framework within two months, and keeping voice data on-premise may become a regulatory advantage for agencies that handle sensitive citizen communications.

Regional Language Packs Built for Southeast Asian Code-Switching

The third path targets the specific linguistic problem that makes Philippine VoIP translation so difficult: code-switching. Filipino speakers don’t neatly separate languages. A customer service call might start in English, drift into Taglish (Tagalog-English mix), include a Bisaya aside to a colleague, and return to formal English for the resolution. All of that can happen within 90 seconds.

Standard ASR engines treat each language as a discrete mode. They detect one language, transcribe in that language, and translate from that language. Code-switching breaks this model because the language changes mid-sentence, sometimes mid-word.
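A toy example shows why a single language label per utterance loses information. The sketch below tags each word against a tiny hypothetical Tagalog word list and compares that with one majority label for the whole sentence. Real engines work on audio with acoustic and language models, not word lists, so treat this purely as an illustration of the failure mode.

```python
from itertools import groupby

# Tiny hypothetical lexicon, for illustration only.
TAGALOG_WORDS = {"pasensya", "na", "po", "paki", "lang", "sige", "opo", "naman"}


def tag_word(word):
    """Label one word 'tl' (Tagalog) or 'en' (everything else)."""
    cleaned = word.lower().strip(".,!?\"'")
    return "tl" if cleaned in TAGALOG_WORDS else "en"


def segment_by_language(utterance):
    """Group consecutive words that share a language label."""
    tagged = [(tag_word(word), word) for word in utterance.split()]
    return [
        (lang, " ".join(word for _, word in group))
        for lang, group in groupby(tagged, key=lambda pair: pair[0])
    ]


def single_language_guess(utterance):
    """What a discrete-mode engine does: one majority label per utterance."""
    tags = [tag_word(word) for word in utterance.split()]
    return max(set(tags), key=tags.count)


if __name__ == "__main__":
    line = "Ma'am, pasensya na po, paki-hold lang while I check your account"
    print(single_language_guess(line))
    for lang, text in segment_by_language(line):
        print(lang, "|", text)
```

The majority label treats the whole sentence as English, so the Tagalog segments would go to the wrong model. Note that "paki-hold" joins a Tagalog prefix to an English verb, a mid-word switch that even this word-level tagging cannot split.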

Speechmatics addressed this directly with their Southeast Asia bilingual pack, designed specifically for the region’s multilingual reality. AirAsia built a multilingual conversational AI bot handling English, Mandarin, Malay, and Tamil that improved customer support efficiency by 25%. Philippine call centers already support Mandarin, Japanese, and Korean as the top Asian languages in the sector, making these packs an attractive fit for specific accounts.

The coverage problem

Speechmatics’ pack handles major Southeast Asian language pairs well. It doesn’t cover the long tail of Philippine languages that surface in government hotlines and provincial hospital switchboards. The Philippines has 175+ living languages. When a farmer in Leyte calls a DSWD hotline speaking Waray-Waray, no commercially available real-time VoIP translation product will handle that call accurately.

The bilingual packs still need network bandwidth to function. Whether they run in the cloud or on-premise, they add processing overhead to every call. If your site’s network infrastructure can’t sustain clean voice traffic without AI processing, adding a translation layer on top will degrade call quality for everyone.

Warning: Code-switching detection adds 150–400ms of processing latency per utterance beyond standard monolingual ASR. On a Philippine WAN link with existing 80–120ms latency to cloud endpoints, the combined 230–520ms can exceed the 300ms threshold where callers start talking over each other, even before baseline ASR and translation time is counted.
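The arithmetic behind that warning is worth running against your own link. The sketch below adds the two ranges from the text and computes how much code-switching overhead a link can absorb before crossing the 300ms threshold. It deliberately ignores baseline ASR, translation, and synthesis time, which only make the picture worse; the function names are illustrative.

```python
WAN_LATENCY_MS = (80, 120)           # range quoted in the text
CODE_SWITCH_OVERHEAD_MS = (150, 400)  # range quoted in the text
TALK_OVER_THRESHOLD_MS = 300


def add_ranges(a, b):
    """Add two (low, high) ranges end to end."""
    return (a[0] + b[0], a[1] + b[1])


def overhead_headroom_ms(wan_latency_ms, threshold_ms=TALK_OVER_THRESHOLD_MS):
    """Processing overhead the link can absorb before hitting the threshold."""
    return threshold_ms - wan_latency_ms


if __name__ == "__main__":
    low, high = add_ranges(WAN_LATENCY_MS, CODE_SWITCH_OVERHEAD_MS)
    print(f"combined delay: {low}-{high} ms")
    for wan in WAN_LATENCY_MS:
        print(f"WAN {wan} ms leaves {overhead_headroom_ms(wan)} ms of headroom")
```

The combined range is 230–520ms, so the best case stays under the threshold while most of the range does not. With 80ms of WAN latency, any code-switching overhead above 220ms crosses the line; at 120ms, the limit is 180ms.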

When regional packs fit

Regional packs fit mid-size operations handling calls in 2–4 specific language pairs: hotels in Makati or Cebu serving Korean and Japanese tourists, or BPO teams supporting Asian language accounts where the language mix is predictable and the team can tolerate occasional misrecognition on heavily code-switched sentences. These packs give you better accuracy for your specific language pairs than a general-purpose 150-language cloud API, without the GPU hardware cost of a full embedded deployment.

The Verdict

The decision comes down to three variables: your network quality, your language pair predictability, and your willingness to staff AI operations.

If your sites have reliable fiber and your language needs span many pairs, cloud APIs are the pragmatic starting point. Budget PHP 15,000–40,000 per month per 50 concurrent channels, invest in QoS before you invest in translation, and accept that accuracy on code-switched speech will be imperfect. You can deploy in weeks rather than months.

If you’re a large BPO or government agency with data sensitivity, capital for hardware, and engineers who can manage ML inference, embedded ASR gives you the best long-term accuracy, especially if you fine-tune on your own call recordings. Budget PHP 800,000–1.5 million in hardware upfront, plus at least one full-time ML engineer. Deployment takes 3–6 months to reach production quality.
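The two budgets can be compared with a rough break-even calculation. This sketch uses only the figures quoted in this section and rests on several loud assumptions: cloud pricing is billed in whole blocks of 50 channels, one server handles the full load, and the ML engineer's salary, power, and maintenance are excluded because the article gives no figures for them. Including staffing would push the break-even point later.

```python
import math


def cloud_monthly_php(channels, per_block_php, block_size=50):
    """Monthly cloud cost, assuming billing in whole blocks of channels."""
    blocks = math.ceil(channels / block_size)
    return blocks * per_block_php


def breakeven_months(hardware_php, cloud_monthly):
    """Months of cloud spend needed to equal the upfront hardware cost."""
    return hardware_php / cloud_monthly


if __name__ == "__main__":
    channels = 200  # hypothetical deployment size
    cheap_cloud = cloud_monthly_php(channels, 15_000)
    pricey_cloud = cloud_monthly_php(channels, 40_000)
    print(f"cloud: PHP {cheap_cloud:,} to {pricey_cloud:,} per month")
    print(f"fastest break-even: {breakeven_months(800_000, pricey_cloud):.1f} months")
    print(f"slowest break-even: {breakeven_months(1_500_000, cheap_cloud):.1f} months")
```

For 200 channels, hardware alone breaks even somewhere between 5 and 25 months under these assumptions, which is wide enough that the staffing cost and your actual cloud quote will decide the outcome.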

If your operation handles a predictable set of 2–4 language pairs and you want better accuracy than generic cloud translation without the cost of full on-premise AI, regional language packs like Speechmatics’ bilingual offering split the difference. You’ll get meaningful improvement on the specific pairs covered, but you won’t solve the long-tail problem for Philippine regional languages.

None of these options performs well if your underlying VoIP infrastructure is unreliable. Philippine network latency affects every approach: cloud APIs suffer the most, embedded ASR the least, and regional packs fall somewhere in between. Before you evaluate any AI-powered VoIP translation product, run a week of latency and jitter measurements on every WAN link that will carry translated call traffic. If your numbers can’t support clean voice without AI, they certainly won’t support voice with AI layered on top.
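For the measurement week, jitter can be computed from packet timestamps using the running interarrival estimator defined in RFC 3550. The sketch below assumes you already have send and receive times in milliseconds from a capture tool of your choice; the function names are illustrative, and the pass/fail limits are the 30ms jitter and 1% loss figures cited earlier in this article.

```python
def interarrival_jitter_ms(send_ms, recv_ms):
    """Running jitter estimate in the style of RFC 3550.

    For each consecutive packet pair, D is the change in transit time;
    the estimate moves 1/16 of the way toward |D| on every packet.
    """
    jitter = 0.0
    for i in range(1, len(send_ms)):
        d = (recv_ms[i] - recv_ms[i - 1]) - (send_ms[i] - send_ms[i - 1])
        jitter += (abs(d) - jitter) / 16.0
    return jitter


def packet_loss_pct(sent, received):
    """Percentage of packets that never arrived."""
    return 100.0 * (sent - received) / sent if sent else 0.0


def link_ok(jitter_ms, loss_pct, max_jitter_ms=30.0, max_loss_pct=1.0):
    """Apply the jitter and loss limits cited earlier in the article."""
    return jitter_ms <= max_jitter_ms and loss_pct <= max_loss_pct


if __name__ == "__main__":
    send = [0, 20, 40, 60, 80]      # packets sent every 20 ms
    recv = [50, 72, 95, 109, 131]   # hypothetical arrival times
    jitter = interarrival_jitter_ms(send, recv)
    loss = packet_loss_pct(1000, 985)
    print(f"jitter {jitter:.2f} ms, loss {loss:.1f}%, ok={link_ok(jitter, loss)}")
```

Because the estimator smooths over time, log the peak value per hour as well as the average. Short spikes during peak shifts are what break ASR, and a weekly mean will hide them.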

The Philippine BPO industry, hospital systems, and government agencies that get this right will have a genuine competitive edge in multilingual service delivery. The organizations that buy a translation product and bolt it onto bad infrastructure will discover something worse than a language barrier: expensive silence on the line where words should be.